I would like to dedicate this post to SSAS cube incremental processing since it is very interesting approach how proceed your data without a need to process all data in SSAS Cube or all even all data in SSAS database.

On large data volume systems, it could be very useful to do not re-process all data in cubes from the beginning, since with more data coming into your systems it could be very time consuming. Or there could be a request to display data in SSAS cubes more frequently and in production time could be problem to realize Full Processing.

Here I would like to demonstrate the incremental approach for data processing to SSAS cubes and some difficulties you can meet with and you should be aware of.

-

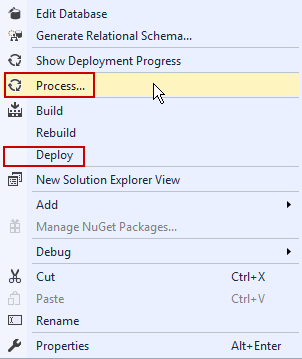

The main assumption is to have cube in processed state to have possibility to add data incrementally. There can be many reasons how to get cube to unprocessed state. Deploying new solution or Fully processed dimensions, when data changed (this will not be needed in scenarios that dimension does not change).

-

Another important thing is that the you should handle the data you are going to process on relation level to do not get duplicities. The data that were already processed in past should not be processed again by incremental processing.

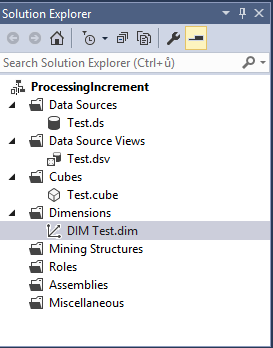

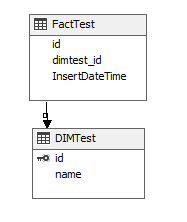

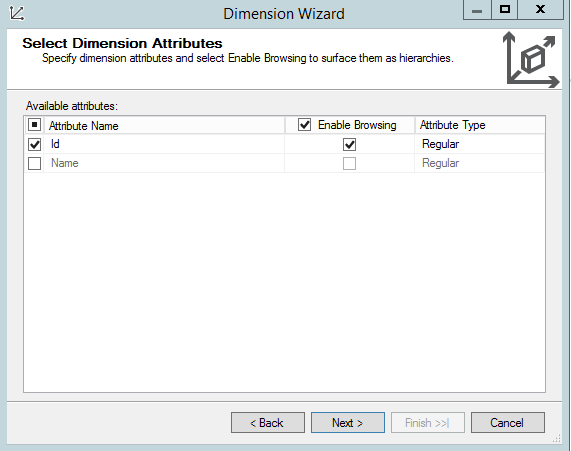

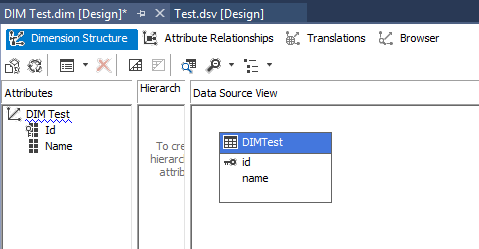

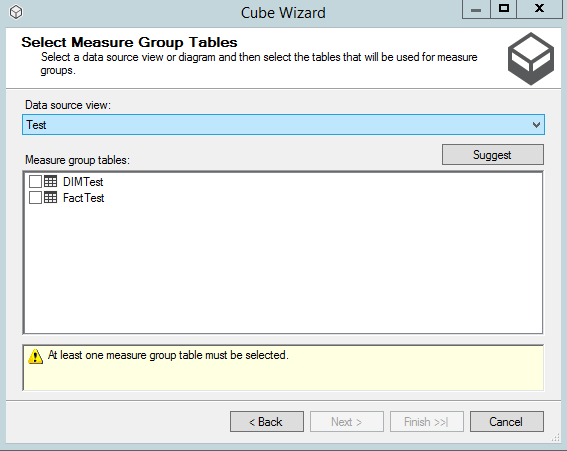

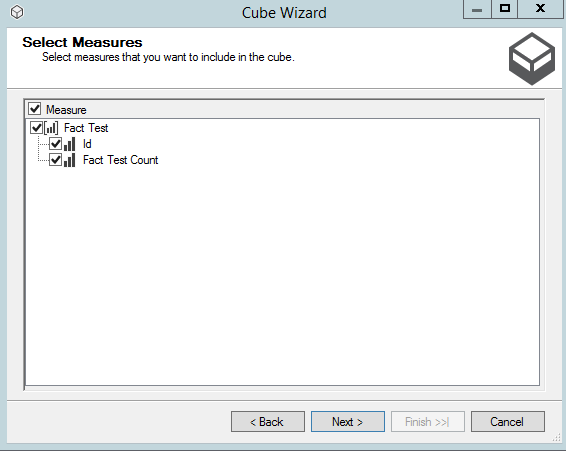

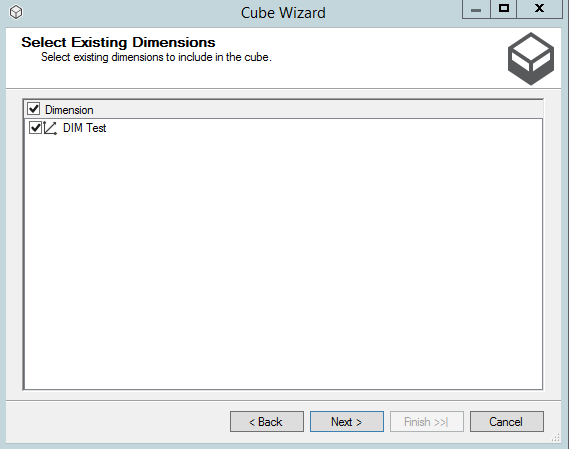

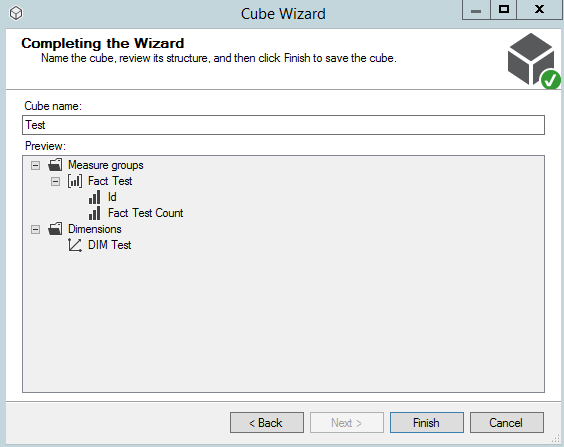

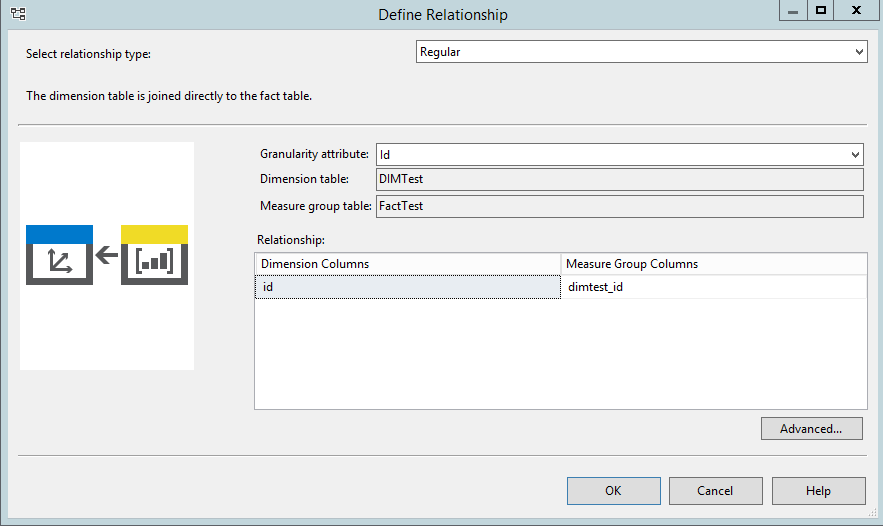

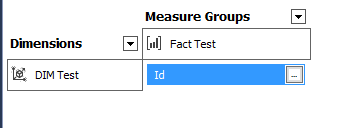

In my simple example I created

-

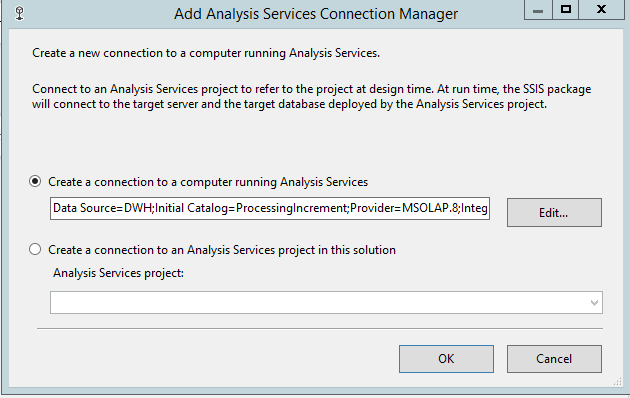

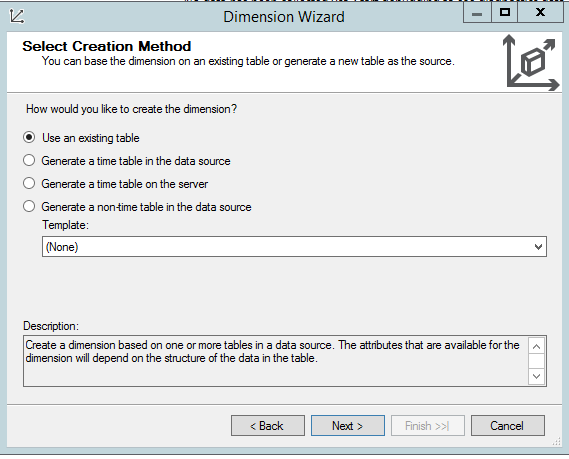

Relation database and SSAS database (see post here)

-

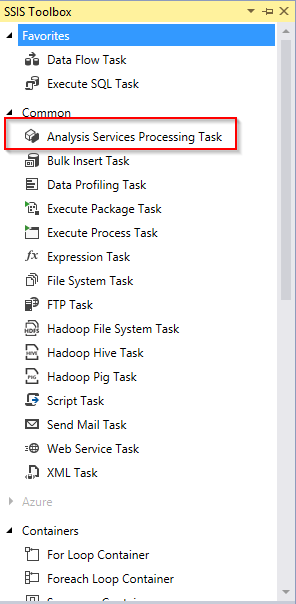

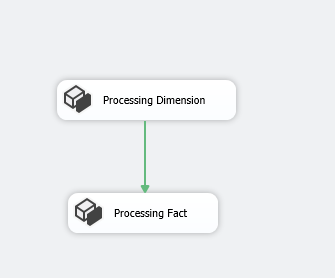

SSIS package for processing data (see post here)

All scripts could be downloadable here.

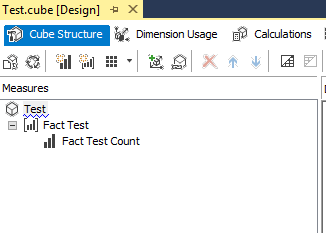

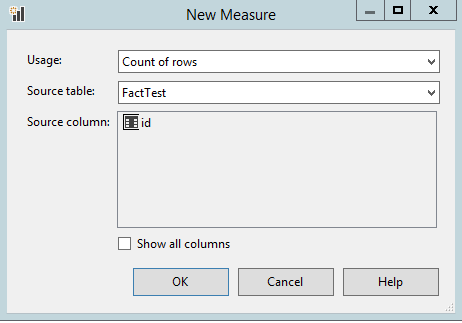

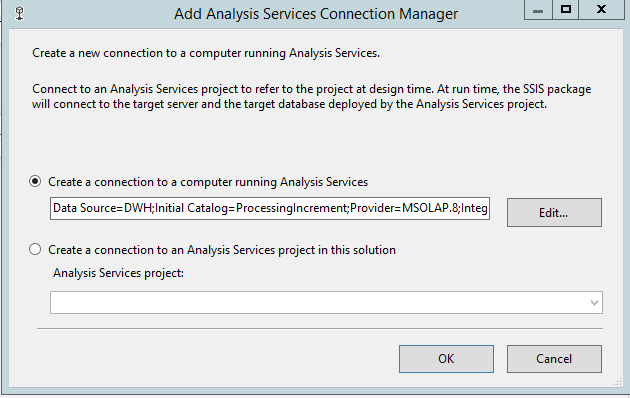

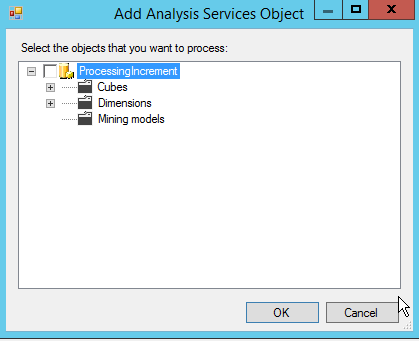

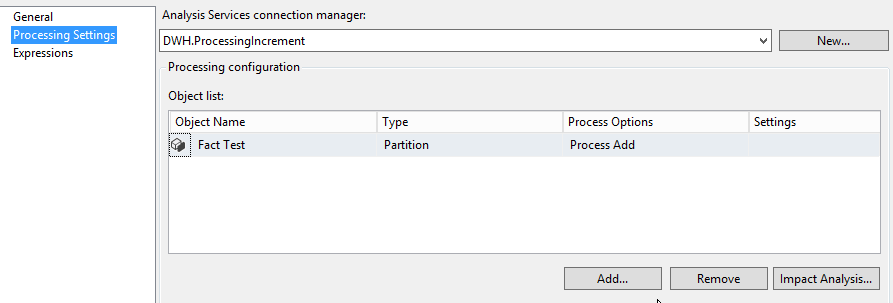

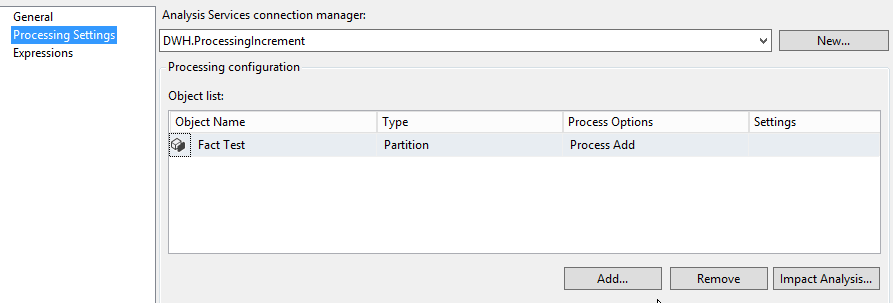

In processing option of fact table select Process Add for incremental processing (check it in SSAS package or if you are using Management Studio set it there). The other options will be explained and demonstrated in one of next post to complete an overview of SSAS processing options.

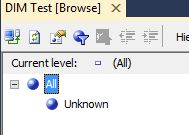

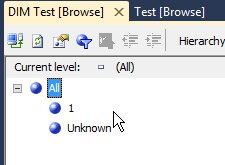

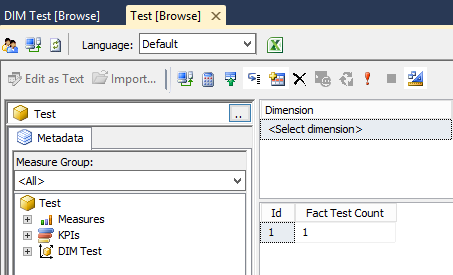

Process database with empty data source. See bellow that SSAS dimension is empty.

Now put data to dimension table and process it.

INSERT INTO dbo.DIMTest(name) SELECT 'Test'

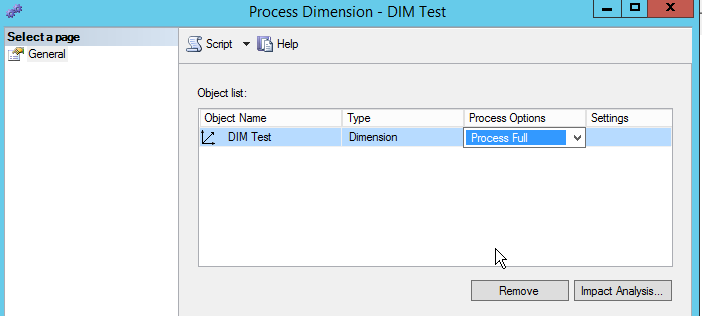

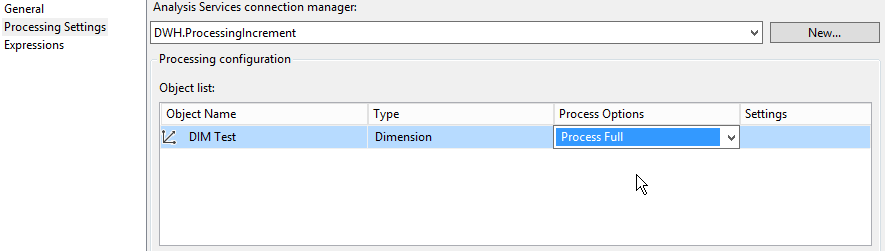

Change Process Options to Process Full because. If you leave Process Default it would not add the data to SSAS dimension, since Process Default cause the data processing when there was a change in dimension structures.

You should see some data in your dimension by Browse Dimension using Management Studio.

Change it in the SSIS package too.

Now let’s try our incremental processing. Put some data to our fact table.

TRUNCATE TABLE dbo.FactTest__Delta INSERT INTO dbo.FactTest__Delta (dimtest_id) SELECT 1 INSERT INTO dbo.FactTest(dimtest_id,InsertDateTime) SELECT dimtest_id,GETDATE() FROM dbo.FactTest__Delta

Process partition using Process add option. Now you see error.

Error: 0xC1140017 at Processing Fact, Analysis Services Execute DDL Task: Errors in the metadata manager. The process type specified for the Fact Test partition is not valid since it is not processed.–

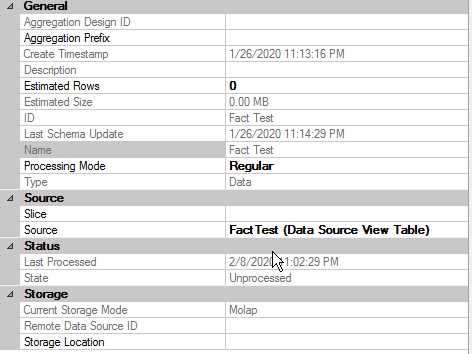

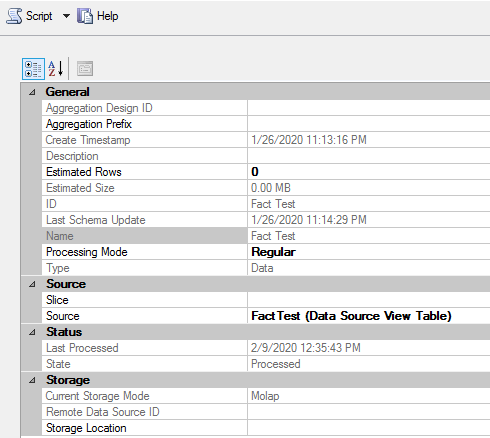

From message you can see that our cube partition is in some invalid state and we are not able to process it. Go to Management Studio, right click on partition or cube click on Properties.

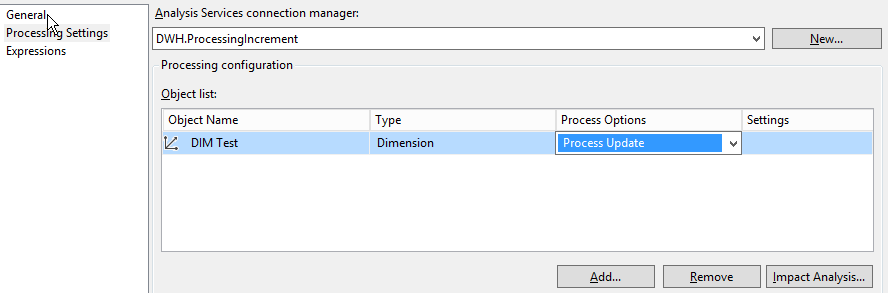

See that cube State property has Unprocessed state assigned. This can happen when you for example deploy new cube solution to the server. In our cases we did not manage any deployment. Another reason could be Full processing of dimensions. When you have changing data in dimensions like we have in our example you should process it, but with Process update options. It will not bring cube to Unprocessed state. Change this option in Management Studio and reprocess the cube/partitions.

Clean the data and repeat previous steps again with Process Options changed to Process Update.

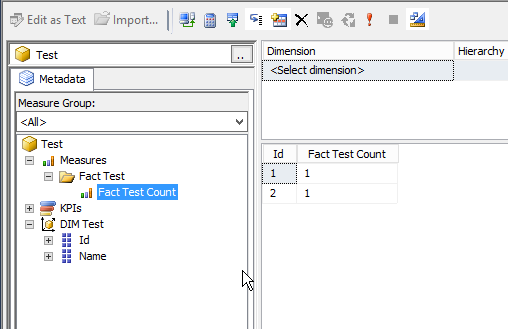

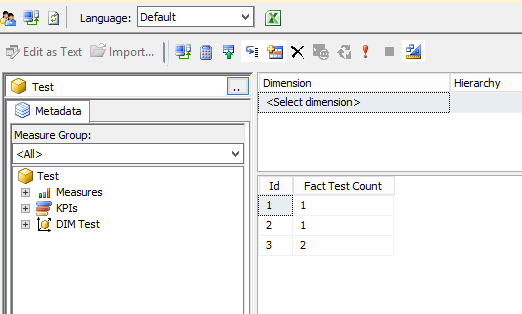

Reprocessing partition with Process Add option you should see data in cube. (Mangament studio -> Right click on Cube object -> Browse cube)

You can see that now the cube State stayed in Processed state.

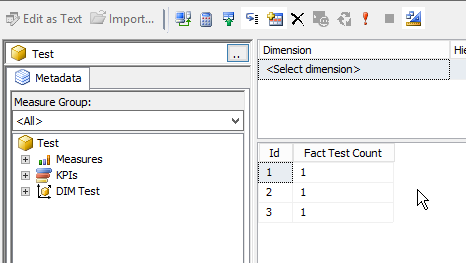

Put another data to dimension and fact table and processed it by created package. Everything works fine, the data were proceeded to the SSAS database.

INSERT INTO dbo.DIMTest (name) SELECT 'Test 2' TRUNCATE TABLE dbo.FactTest__Delta INSERT INTO dbo.FactTest__Delta (dimtest_id) SELECT 2 INSERT INTO dbo.FactTest(dimtest_id,InsertDateTime) SELECT dimtest_id,GETDATE() FROM dbo.FactTest__Delta

You can try to comment cleaning delta table to see what happened. You can see that the data were doubled. It is very important to handle delta table with data that were not proceed to cube.

Incremental processing is very nice and effective way to proceed data to your Data Warehouse and SSAS database. Of course it depends on your data characteristics, if it can be implemented or it there is a better way how to get data to your cubes. But here I would like to show you simple example how you can manage it trough SSIS package. And remember, you should take care of handling already proceed data and status of your SSAS cube.